Every care home in England generates incident reports. Falls, medication errors, near-misses, unexplained injuries: each one triggers a form, a signature, a file. And in too many services, that is precisely where the process ends. The incident report is completed, the box is ticked, and the learning evaporates. The question worth asking in 2026 is not whether care providers are recording incidents. Most are. The question is whether any of it is actually making care safer.

A System Built for Compliance, Not Learning

The architecture of incident reporting in adult social care was designed, largely, to satisfy regulators. The CQC expects evidence that incidents are recorded, investigated, and acted upon. Providers respond accordingly: they build paper trails. What they do not always build is a culture in which frontline staff feel safe to report, managers have the tools to analyse patterns, and leadership acts on what the data reveals.

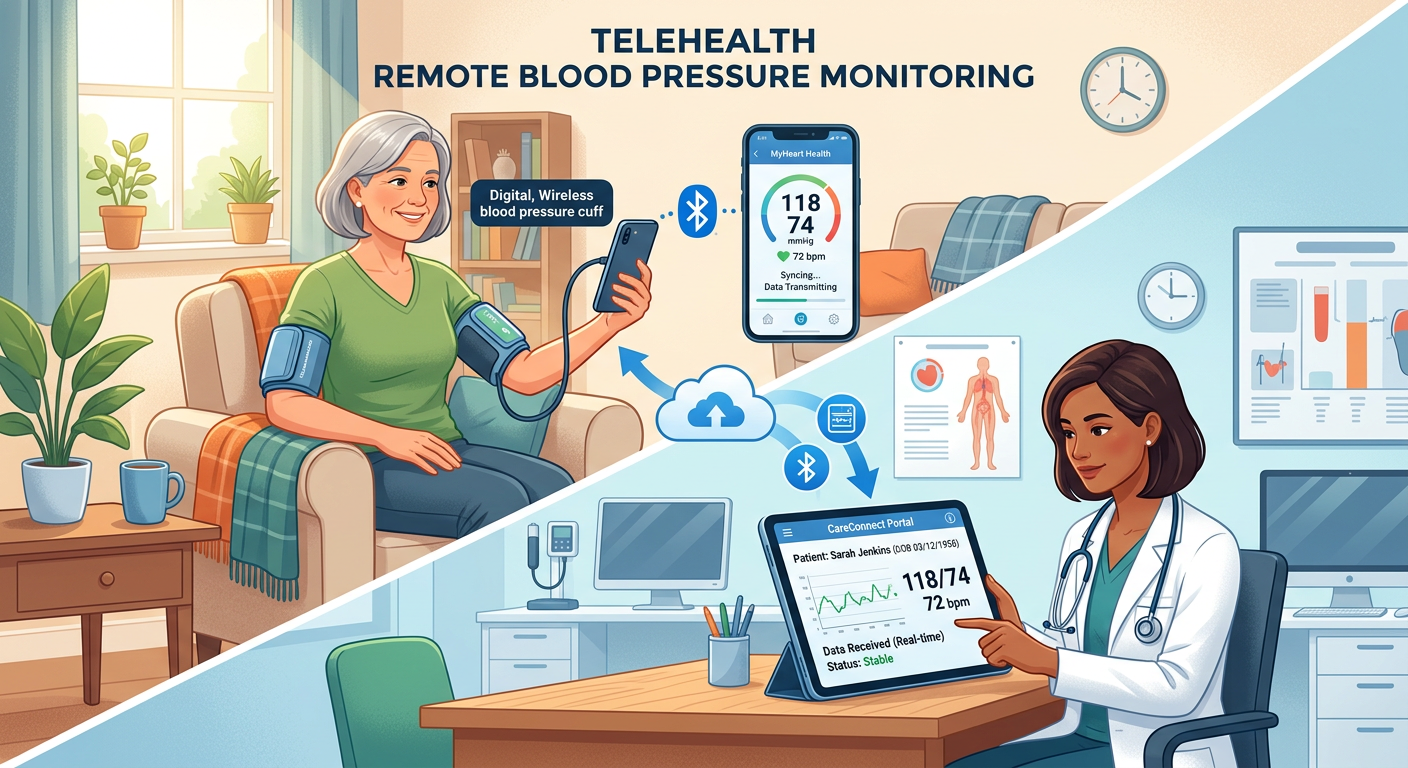

Digital incident and accident reporting systems have been available to the sector for years. Platforms integrated within broader care management software allow staff to log incidents on a mobile device at the point of care, trigger automatic notifications to managers, and feed data into dashboards that surface trends over time. The technology is not new. The adoption, however, remains patchy, and the gap between having a digital system and using it well is wider than most providers would care to admit.

The Reporting Gap Nobody Talks About

Underreporting is the sector’s open secret. Research consistently shows that care staff, particularly those working under pressure in under-resourced services, make calculated decisions about what is worth reporting. A minor fall with no visible injury. A resident who nearly choked but recovered quickly. A medication that was given thirty minutes late. These incidents frequently go unrecorded, not because staff are negligent, but because the reporting process is burdensome, the consequences feel disproportionate, and the feedback loop is non-existent.

Digital systems can reduce the friction of reporting. A well-designed mobile interface takes less time than a paper form. Structured fields prompt staff to capture the right information. Automatic escalation means managers are alerted without relying on a handover conversation that may never happen. But technology cannot fix a culture in which staff fear blame more than they value transparency. If the response to every reported incident is a disciplinary conversation rather than a learning discussion, the reporting rate will fall regardless of how slick the software is.
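To make the structured fields and automatic escalation described above concrete, here is a minimal sketch in Python. It is not drawn from any particular care management platform: the field names, the severity scale, the role names, and the escalation threshold are all assumptions chosen for illustration.

```python
from dataclasses import dataclass
from enum import IntEnum


class Severity(IntEnum):
    """Hypothetical severity scale; real services define their own."""
    NEAR_MISS = 1
    NO_HARM = 2
    MINOR_HARM = 3
    SERIOUS_HARM = 4


@dataclass
class IncidentReport:
    """Structured fields that prompt staff for the same details every time."""
    category: str        # e.g. "fall", "medication_error"
    severity: Severity
    description: str
    reported_by: str


def recipients_for(report, escalate_from=Severity.MINOR_HARM):
    """Decide who is notified about a report.

    Assumed policy: every report reaches the shift lead, and anything at or
    above `escalate_from` also alerts the registered manager, so escalation
    does not depend on a handover conversation.
    """
    recipients = ["shift_lead"]
    if report.severity >= escalate_from:
        recipients.append("registered_manager")
    return recipients


late_dose = IncidentReport(
    "medication_error", Severity.NO_HARM, "Dose given 30 minutes late", "staff_a"
)
bad_fall = IncidentReport(
    "fall", Severity.SERIOUS_HARM, "Fall with suspected fracture", "staff_b"
)

print(recipients_for(late_dose))
print(recipients_for(bad_fall))
```

In this sketch the low-severity report is still recorded and still reaches someone; only the routing changes. That choice reflects the argument here that minor incidents are the ones most likely to go unreported.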

Data Rich, Insight Poor

For providers who have made the transition to digital reporting, a different problem emerges. The data exists; the analysis does not. Incident logs accumulate in dashboards that nobody has time to interrogate. Trend reports are generated monthly and reviewed quarterly, if at all. A care home recording fifteen falls in a single corridor over three months has, in theory, everything it needs to investigate the cause. In practice, the connection between the data and the action is rarely made with the rigour the situation demands.

This is partly a capacity problem. Registered managers are stretched. The expectation that they will also function as data analysts, identifying patterns across incident categories, cross-referencing with staffing levels, shift times, and individual resident profiles, is unrealistic without dedicated support or genuinely intelligent software. Some platforms are beginning to offer automated pattern detection and predictive flagging. These features are promising. They are also, in most services, switched off or ignored.
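The simplest form of pattern detection, such as spotting fifteen falls in one corridor, does not need sophisticated software. The sketch below, in Python, counts incidents by category and location and flags any pair that crosses a threshold. The record fields, the sample data, and the threshold of five are assumptions made for illustration, not a description of how any real platform works.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Incident:
    """One logged incident. Field names are illustrative assumptions."""
    occurred_on: date
    category: str   # e.g. "fall", "near_miss"
    location: str   # e.g. "corridor_b"


def find_hotspots(incidents, since, threshold):
    """Return {(category, location): count} for every pair with at least
    `threshold` incidents recorded on or after `since`."""
    counts = Counter(
        (i.category, i.location) for i in incidents if i.occurred_on >= since
    )
    return {key: n for key, n in counts.items() if n >= threshold}


# Made-up data: fifteen falls in one corridor, plus two unrelated falls.
log = [Incident(date(2026, 1, 1 + d), "fall", "corridor_b") for d in range(15)]
log.append(Incident(date(2026, 1, 20), "fall", "lounge"))
log.append(Incident(date(2025, 6, 1), "fall", "lounge"))

hotspots = find_hotspots(log, since=date(2026, 1, 1), threshold=5)
print(hotspots)
```

A count like this only says where to look. Cross-referencing with staffing levels, shift times, and resident profiles is the harder step, and it still needs someone with the time to act on the result.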

The Regulatory Incentive Is Pointing the Wrong Way

There is a structural problem at the heart of this. The regulatory framework, for all its stated commitment to learning cultures, still creates incentives that work against honest reporting. A provider with a high incident reporting rate may attract scrutiny, even when that rate reflects a healthy, transparent culture rather than a dangerous one. A provider with suspiciously low reporting may sail through an inspection if the paperwork looks clean.

The CQC’s single assessment framework places greater emphasis on learning from incidents, and that shift is welcome. But the sector will not change its relationship with incident data until the message is unambiguous: more reporting, done well, is a sign of strength. Providers who surface near-misses and act on them should be recognised for it, not treated as organisations with more problems than their quieter peers.

What Good Actually Looks Like

The providers getting this right share a few characteristics. They have invested in digital reporting tools that are genuinely easy to use at the point of care. They have built a culture in which reporting is expected, normalised, and met with curiosity rather than blame. They review incident data in regular, structured team meetings where frontline staff are part of the conversation. And they close the loop: when a pattern is identified and an action is taken, staff are told what changed and why.

None of this requires the most expensive software on the market. It requires leadership that treats incident data as a strategic asset rather than a compliance obligation. The technology is a tool; the culture is the intervention.

The sector has spent years arguing that it needs better data to make the case for investment, reform, and recognition. The incident data already exists. The harder question is whether anyone is actually using it.